2023

Garcia, Rosalinda; Patricia Morreale; Lara Letaw; Amreeta Chatterjee; Pakati Patel; Sarah Yang; Isaac Tijerina Escobar; Geraldine Jimena Noa; Margaret Burnett

“Regular” CS × Inclusive Design = Smarter Students and Greater Diversity Journal Article

In: ACM Transactions on Computing Education, 2023.

Links | BibTeX | Tags: Human-Computer Interaction

@article{noauthor_regular_nodate,

title = {“Regular” CS × Inclusive Design = Smarter Students and Greater Diversity},

author = {Garcia, Rosalinda and Patricia Morreale and Lara Letaw and Amreeta Chatterjee and Pakati Patel and Sarah Yang and Isaac Tijerina Escobar and Geraldine Jimena Noa and Margaret Burnett},

url = {https://dl.acm.org/doi/10.1145/3603535},

year = {2023},

date = {2023-07-22},

urldate = {2023-07-22},

journal = {ACM Transactions on Computing Education},

keywords = {Human-Computer Interaction},

pubstate = {published},

tppubtype = {article}

}

2022

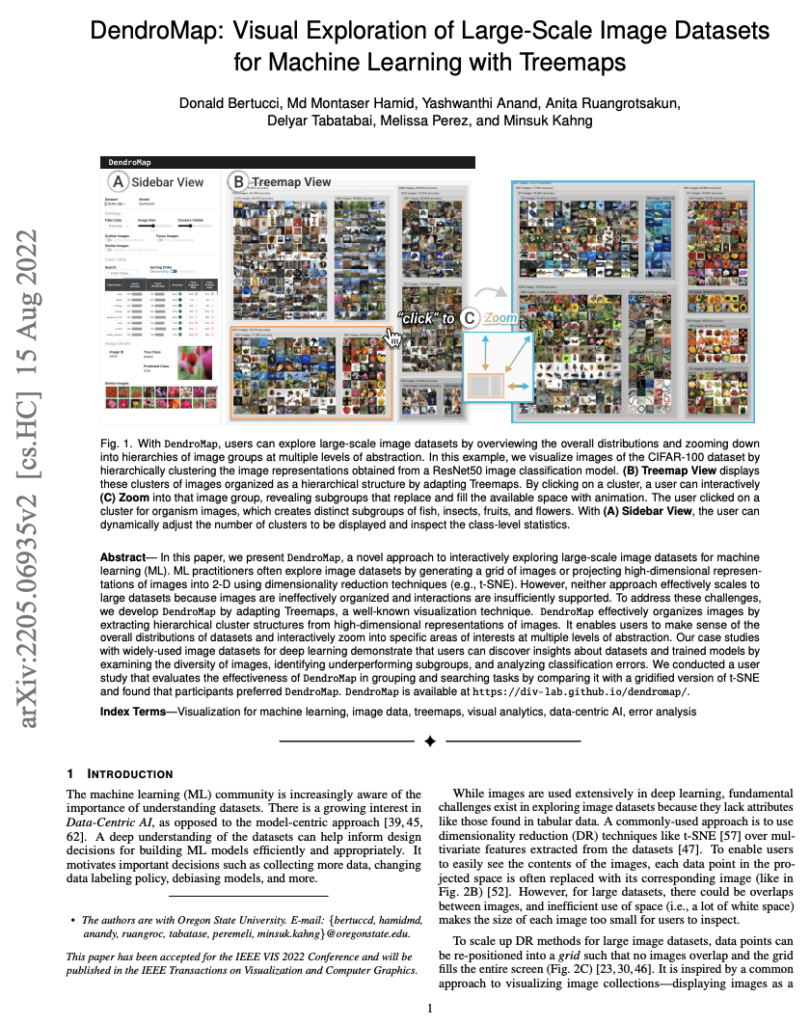

Donald Bertucci; Md Montaser Hamid; Yashwanthi Anand; Anita Ruangrotsakun; Delyar Tabatabai; Melissa Perez; Minsuk Kahng

DendroMap: Visual Exploration of Large-Scale Image Datasets for Machine Learning with Treemaps Journal Article

In: IEEE Transactions on Visualization and Computer Graphics (IEEE VIS 2022 Conference), 2022.

Abstract | Links | BibTeX | Tags: AI, Human-Computer Interaction

@article{bertucci_dendromap_2022,

title = {DendroMap: Visual Exploration of Large-Scale Image Datasets for Machine Learning with Treemaps},

author = { Donald Bertucci and Md Montaser Hamid and Yashwanthi Anand and Anita Ruangrotsakun and Delyar Tabatabai and Melissa Perez and Minsuk Kahng},

url = {https://ieeexplore.ieee.org/document/9904448},

doi = {10.1109/TVCG.2022.3209425},

year = {2022},

date = {2022-08-01},

urldate = {2022-08-01},

journal = {IEEE Transactions on Visualization and Computer Graphics (IEEE VIS 2022 Conference)},

publisher = {arXiv},

abstract = {In this paper, we present DendroMap, a novel approach to interactively exploring large-scale image datasets for machine learning (ML). ML practitioners often explore image datasets by generating a grid of images or projecting high-dimensional representations of images into 2-D using dimensionality reduction techniques (e.g., t-SNE). However, neither approach effectively scales to large datasets because images are ineffectively organized and interactions are insufficiently supported. To address these challenges, we develop DendroMap by adapting Treemaps, a well-known visualization technique. DendroMap effectively organizes images by extracting hierarchical cluster structures from high-dimensional representations of images. It enables users to make sense of the overall distributions of datasets and interactively zoom into specific areas of interests at multiple levels of abstraction. Our case studies with widely-used image datasets for deep learning demonstrate that users can discover insights about datasets and trained models by examining the diversity of images, identifying underperforming subgroups, and analyzing classification errors. We conducted a user study that evaluates the effectiveness of DendroMap in grouping and searching tasks by comparing it with a gridified version of t-SNE and found that participants preferred DendroMap. DendroMap is available at https://div-lab.github.io/dendromap/.},

howpublished = {IEEE VIS 2022 Conference and will be published in the IEEE Transactions on Visualization and Computer Graphics},

keywords = {AI, Human-Computer Interaction},

pubstate = {published},

tppubtype = {article}

}

Mariam Guizani; Igor Steinmacher; Jillian Emard; Abrar Fallatah; Margaret Burnett; Anita Sarma

How to Debug Inclusivity Bugs? A Debugging Process with Information Architecture Proceedings Article

In: 2022 IEEE/ACM 44th International Conference on Software Engineering: Software Engineering in Society (ICSE-SEIS), pp. 90–101, IEEE Computer Society, 2022, ISBN: 978-1-66549-594-3.

Abstract | Links | BibTeX | Tags: Human-Computer Interaction

@inproceedings{guizani_how_2022,

title = {How to Debug Inclusivity Bugs? A Debugging Process with Information Architecture},

author = { Mariam Guizani and Igor Steinmacher and Jillian Emard and Abrar Fallatah and Margaret Burnett and Anita Sarma},

url = {https://www.computer.org/csdl/proceedings-article/icse-seis/2022/959400a090/1EmrhU2EkcE},

doi = {10.1109/ICSE-SEIS55304.2022.9794009},

isbn = {978-1-66549-594-3},

year = {2022},

date = {2022-05-01},

urldate = {2022-05-01},

booktitle = {2022 IEEE/ACM 44th International Conference on Software Engineering: Software Engineering in Society (ICSE-SEIS)},

pages = {90--101},

publisher = {IEEE Computer Society},

abstract = {Although some previous research has found ways to find inclusivity bugs (biases in software that introduce inequities), little attention has been paid to how to go about fixing such bugs. Without a process to move from finding to fixing, acting upon such findings is an ad-hoc activity, at the mercy of the skills of each individual developer. To address this gap, we created Why/Where/Fix, a systematic inclusivity debugging process whose inclusivity fault localization harnesses Information Architecture (IA), the way user-facing information is organized, structured and labeled. We then conducted a multi-stage qualitative empirical evaluation of the effectiveness of Why/Where/Fix, using an Open Source Software (OSS) project's infrastructure as our setting. In our study, the OSS project team used the Why/Where/Fix process to find inclusivity bugs, localize the IA faults behind them, and then fix the IA to remove the inclusivity bugs they had found. Our results showed that using Why/Where/Fix reduced the number of inclusivity bugs that OSS newcomer participants experienced by 90\%. Diverse teams have been shown to be more productive as well as more innovative. One form of diversity, cognitive diversity - differences in cognitive styles - helps generate diversity of thoughts. However, cognitive diversity is often not supported in software tools. This means that these tools are not inclusive of individuals with different cognitive styles (e.g., those who like to learn through process vs. those who learn by tinkering), which burdens these individuals with a cognitive “tax” each time they use the tool. In this work, we present an approach that enables software developers to: (1) evaluate their tools, especially those that are information-heavy, to find “inclusivity bugs” (cases where diverse cognitive styles are unsupported), (2) find where in the tool these bugs lurk, and (3) fix these bugs.
Our evaluation in an open source project shows that by following this approach developers were able to reduce inclusivity bugs in their projects by 90\%.},

keywords = {Human-Computer Interaction},

pubstate = {published},

tppubtype = {inproceedings}

}

Roli Khanna; Jonathan Dodge; Andrew Anderson; Rupika Dikkala; Jed Irvine; Zeyad Shureih; Kin-Ho Lam; Caleb R. Matthews; Zhengxian Lin; Minsuk Kahng; Alan Fern; Margaret Burnett

Finding AI’s Faults with AAR/AI: An Empirical Study Journal Article

In: ACM Transactions on Interactive Intelligent Systems, vol. 12, no. 1, pp. 1:1–1:33, 2022, ISSN: 2160-6455.

Abstract | Links | BibTeX | Tags: AI, Human-Computer Interaction

@article{khanna_finding_2022,

title = {Finding AI’s Faults with AAR/AI: An Empirical Study},

author = { Roli Khanna and Jonathan Dodge and Andrew Anderson and Rupika Dikkala and Jed Irvine and Zeyad Shureih and Kin-Ho Lam and Caleb R. Matthews and Zhengxian Lin and Minsuk Kahng and Alan Fern and Margaret Burnett},

url = {https://doi.org/10.1145/3487065},

doi = {10.1145/3487065},

issn = {2160-6455},

year = {2022},

date = {2022-03-01},

urldate = {2022-03-01},

journal = {ACM Transactions on Interactive Intelligent Systems},

volume = {12},

number = {1},

pages = {1:1--1:33},

abstract = {Would you allow an AI agent to make decisions on your behalf? If the answer is “not always,” the next question becomes “in what circumstances”? Answering this question requires human users to be able to assess an AI agent{\textemdash}and not just with overall pass/fail assessments or statistics. Here users need to be able to localize an agent’s bugs so that they can determine when they are willing to rely on the agent and when they are not. After-Action Review for AI (AAR/AI), a new AI assessment process for integration with Explainable AI systems, aims to support human users in this endeavor, and in this article we empirically investigate AAR/AI’s effectiveness with domain-knowledgeable users. Our results show that AAR/AI participants not only located significantly more bugs than non-AAR/AI participants did (i.e., showed greater recall) but also located them more precisely (i.e., with greater precision). In fact, AAR/AI participants outperformed non-AAR/AI participants on every bug and were, on average, almost six times as likely as non-AAR/AI participants to find any particular bug. Finally, evidence suggests that incorporating labeling into the AAR/AI process may encourage domain-knowledgeable users to abstract above individual instances of bugs; we hypothesize that doing so may have contributed further to AAR/AI participants’ effectiveness.},

keywords = {AI, Human-Computer Interaction},

pubstate = {published},

tppubtype = {article}

}

Jonathan Dodge; Andrew A. Anderson; Matthew Olson; Rupika Dikkala; Margaret Burnett

How Do People Rank Multiple Mutant Agents? Proceedings Article

In: 27th International Conference on Intelligent User Interfaces, pp. 191–211, Association for Computing Machinery, New York, NY, USA, 2022, ISBN: 978-1-4503-9144-3.

Abstract | Links | BibTeX | Tags: AI, Human-Computer Interaction

@inproceedings{dodge_how_2022,

title = {How Do People Rank Multiple Mutant Agents?},

author = { Jonathan Dodge and Andrew A. Anderson and Matthew Olson and Rupika Dikkala and Margaret Burnett},

url = {https://doi.org/10.1145/3490099.3511115},

doi = {10.1145/3490099.3511115},

isbn = {978-1-4503-9144-3},

year = {2022},

date = {2022-03-01},

urldate = {2022-03-01},

booktitle = {27th International Conference on Intelligent User Interfaces},

pages = {191--211},

publisher = {Association for Computing Machinery},

address = {New York, NY, USA},

series = {IUI '22},

abstract = {Faced with several AI-powered sequential decision-making systems, how might someone choose on which to rely? For example, imagine car buyer Blair shopping for a self-driving car, or developer Dillon trying to choose an appropriate ML model to use in their application. Their first choice might be infeasible (i.e., too expensive in money or execution time), so they may need to select their second or third choice. To address this question, this paper presents: 1) Explanation Resolution, a quantifiable direct measurement concept; 2) a new XAI empirical task to measure explanations: “the Ranking Task”; and 3) a new strategy for inducing controllable agent variations{\textemdash}Mutant Agent Generation. In support of those main contributions, it also presents 4) novel explanations for sequential decision-making agents; 5) an adaptation to the AAR/AI assessment process; and 6) a qualitative study around these devices with 10 participants to investigate how they performed the Ranking Task on our mutant agents, using our explanations, and structured by AAR/AI. From an XAI researcher perspective, just as mutation testing can be applied to any code, mutant agent generation can be applied to essentially any neural network for which one wants to evaluate an assessment process or explanation type. As to an XAI user’s perspective, the participants ranked the agents well overall, but showed the importance of high explanation resolution for close differences between agents. The participants also revealed the importance of supporting a wide diversity of explanation diets and agent “test selection” strategies.},

keywords = {AI, Human-Computer Interaction},

pubstate = {published},

tppubtype = {inproceedings}

}

Amreeta Chatterjee; Lara Letaw; Rosalinda Garcia; Doshna Umma Reddy; Rudrajit Choudhuri; Sabyatha Sathish Kumar; Patricia Morreale; Anita Sarma; Margaret Burnett

Inclusivity Bugs in Online Courseware: A Field Study Proceedings Article

In: Proceedings of the 2022 ACM Conference on International Computing Education Research - Volume 1, pp. 356–372, Association for Computing Machinery, New York, NY, USA, 2022, ISBN: 978-1-4503-9194-8.

Abstract | Links | BibTeX | Tags: Education, Human-Computer Interaction

@inproceedings{chatterjee_inclusivity_2022,

title = {Inclusivity Bugs in Online Courseware: A Field Study},

author = { Amreeta Chatterjee and Lara Letaw and Rosalinda Garcia and Doshna Umma Reddy and Rudrajit Choudhuri and Sabyatha Sathish Kumar and Patricia Morreale and Anita Sarma and Margaret Burnett},

url = {https://doi.org/10.1145/3501385.3543973},

doi = {10.1145/3501385.3543973},

isbn = {978-1-4503-9194-8},

year = {2022},

date = {2022-01-01},

urldate = {2022-01-01},

booktitle = {Proceedings of the 2022 ACM Conference on International Computing Education Research - Volume 1},

pages = {356--372},

publisher = {Association for Computing Machinery},

address = {New York, NY, USA},

series = {ICER '22},

abstract = {Motivation: Although asynchronous online CS courses have enabled more diverse populations to access CS higher education, research shows that online CS-ed is far from inclusive, with women and other underrepresented groups continuing to face inclusion gaps. Worse, diversity/inclusion research in CS-ed has largely overlooked the online courseware{\textemdash}the web pages and course materials that populate the online learning platforms{\textemdash}that constitute asynchronous online CS-ed’s only mechanism of course delivery. Objective: To investigate this aspect of CS-ed’s inclusivity, we conducted a three-phase field study with online CS faculty, with three research questions: (1) whether, how, and where online CS-ed’s courseware has inclusivity bugs; (2) whether an automated tool can detect them; and (3) how online CS faculty would make use of such a tool. Method: In the study’s first phase, we facilitated online CS faculty members’ use of GenderMag (an inclusive design method) on two online CS courses to find their own courseware’s inclusivity bugs. In the second phase, we used a variant of the GenderMag Automated Inclusivity Detector (AID) tool to automatically locate a “vertical slice” of such courseware inclusivity bugs, and evaluated the tool’s accuracy. In the third phase, we investigated how online CS faculty used the tool to find inclusivity bugs in their own courseware. Results: The results revealed 29 inclusivity bugs spanning 6 categories in the online courseware of 9 online CS courses; showed that the tool achieved an accuracy of 75\% at finding such bugs; and revealed new insights into how a tool could help online CS faculty uncover assumptions about their own courseware to make it more inclusive.
Implications: As the first study to investigate the presence and types of cognitive- and gender-inclusivity bugs in online CS courseware and whether an automated tool can find them, our results reveal new possibilities for how to make online CS education a more inclusive virtual environment for gender-diverse students.},

keywords = {Education, Human-Computer Interaction},

pubstate = {published},

tppubtype = {inproceedings}

}

2021

Jonathan Dodge; Roli Khanna; Jed Irvine; Kin-ho Lam; Theresa Mai; Zhengxian Lin; Nicholas Kiddle; Evan Newman; Andrew Anderson; Sai Raja; Caleb Matthews; Christopher Perdriau; Margaret Burnett; Alan Fern

After-Action Review for AI (AAR/AI) Journal Article

In: ACM Transactions on Interactive Intelligent Systems, vol. 11, no. 3-4, pp. 29:1–29:35, 2021, ISSN: 2160-6455.

Abstract | Links | BibTeX | Tags: AI, Human-Computer Interaction

@article{dodge_after-action_2021,

title = {After-Action Review for AI (AAR/AI)},

author = { Jonathan Dodge and Roli Khanna and Jed Irvine and Kin-ho Lam and Theresa Mai and Zhengxian Lin and Nicholas Kiddle and Evan Newman and Andrew Anderson and Sai Raja and Caleb Matthews and Christopher Perdriau and Margaret Burnett and Alan Fern},

url = {https://doi.org/10.1145/3453173},

doi = {10.1145/3453173},

issn = {2160-6455},

year = {2021},

date = {2021-01-01},

urldate = {2021-01-01},

journal = {ACM Transactions on Interactive Intelligent Systems},

volume = {11},

number = {3-4},

pages = {29:1--29:35},

abstract = {Explainable AI is growing in importance as AI pervades modern society, but few have studied how explainable AI can directly support people trying to assess an AI agent. Without a rigorous process, people may approach assessment in ad hoc ways{\textemdash}leading to the possibility of wide variations in assessment of the same agent due only to variations in their processes. AAR, or After-Action Review, is a method some military organizations use to assess human agents, and it has been validated in many domains. Drawing upon this strategy, we derived an After-Action Review for AI (AAR/AI), to organize ways people assess reinforcement learning agents in a sequential decision-making environment. We then investigated what AAR/AI brought to human assessors in two qualitative studies. The first investigated AAR/AI to gather formative information, and the second built upon the results, and also varied the type of explanation (model-free vs. model-based) used in the AAR/AI process. Among the results were the following: (1) participants reporting that AAR/AI helped to organize their thoughts and think logically about the agent, (2) AAR/AI encouraged participants to reason about the agent from a wide range of perspectives, and (3) participants were able to leverage AAR/AI with the model-based explanations to falsify the agent’s predictions.},

keywords = {AI, Human-Computer Interaction},

pubstate = {published},

tppubtype = {article}

}